Agentic security is an IAM problem in disguise

Most agentic security challenges are identity and access management in disguise. Learn how to treat AI agents as non-human identities with scoped credentials.

Most agentic security challenges are identity and access management in disguise. Learn how to treat AI agents as non-human identities with scoped credentials.

Most of what engineers are struggling with in agentic security isn't new. It's identity and access management, applied to agents.

That was the throughline at a Rocky Mountain AI Interest Group (RMAIIG) meetup this week, where the AI/ML Engineering subgroup worked through a presentation by Bill McIntyre, AI and machine learning architect, on securing AI agentic applications. The vocabulary kept shifting: guardrails, prompt hardening, container isolation. But the underlying question stayed the same: who is this agent, what is it allowed to do, do you have visibility into what it's doing, and how do you stop it when something goes wrong — and who is the human responsible for it.

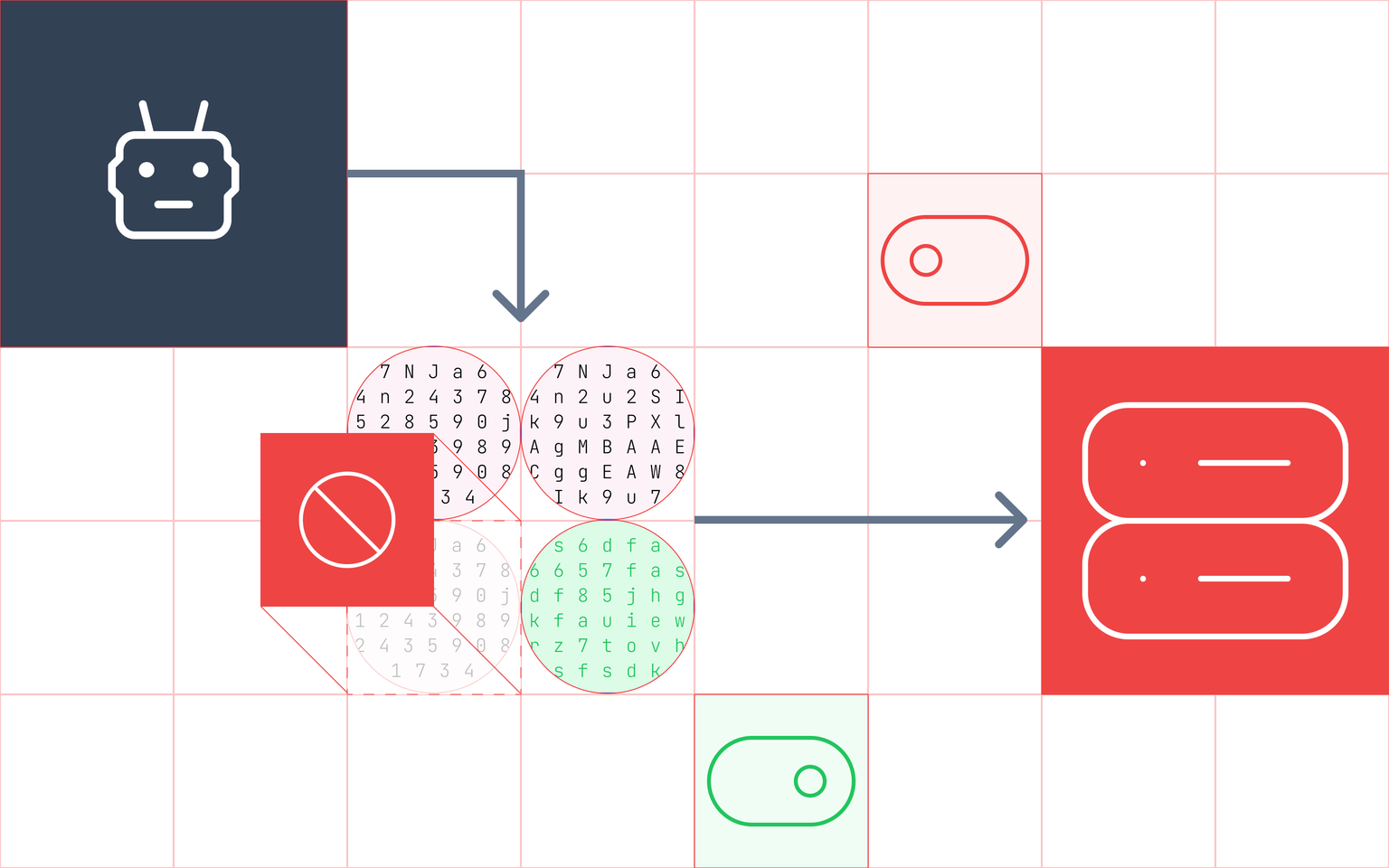

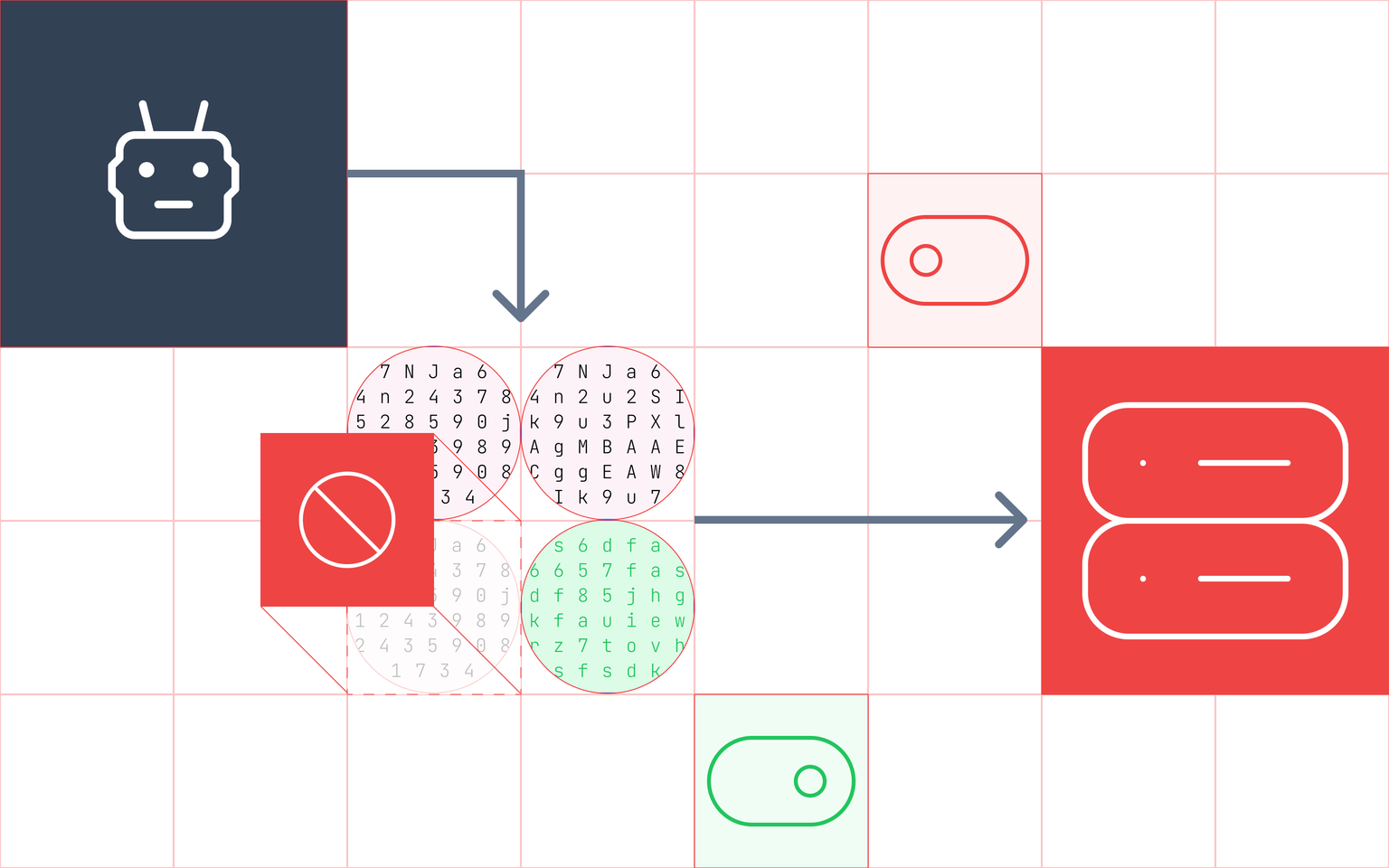

McIntyre's framing early in the deck is worth sitting with: in a traditional web app, bad input creates bad data. In an agentic app, bad input creates bad actions. The model doesn't just return wrong text. It calls tools, sends emails, reads files, and hits APIs. An agent becomes critically exploitable when it has access to private data, is exposed to untrusted content, and can communicate externally. Most production agentic apps meet all three conditions.

The core vulnerability is straightforward: LLMs can be tricked into doing things the user never asked them to do, if adversarial instructions are embedded in content the agent fetches. The missing egress filtering and lack of human checkpoints didn't cause that vulnerability, they just determined how far the damage spread once it was exploited. The injection problem and the infrastructure problem are distinct, and both need to be addressed.

The presentation breaks down three paths for injecting malicious content into RAG systems:

Vector-embedded RAG: the payload is embedded in a document that gets chunked and vectorized. It has to survive the embedding process while retaining enough semantic fidelity to influence retrieval which is difficult, but research shows it's achievable.

Full-text / direct: the entire document hits the context window intact. No transformation required. This is how most real-world attacks work: web pages, PDFs, emails, MCP tool responses all arrive unmodified.

Metadata and hidden fields: the payload hides where humans don't look but agents parse. PDF metadata, HTML comments, zero-width Unicode. It survives human review for the most part.

Authorization at the retrieval layer controls which agents can access which documents, not just which users, and is one important layer of defense. It limits blast radius. But it doesn't prevent injection; it constrains what a successfully injected agent can reach. That distinction matters when you're deciding where to invest.

Guardrails are authorization enforcement by another name. When an engineer says "I want to prevent the agent from accessing sensitive customer records," they're describing an authorization policy. When they say "I want to limit what the agent can send externally," that's egress control — a firewall rule and an authorization decision at once. The two conversations kept merging because it points to the underlying model for least privilege.

The group raised the case against rolling your own guardrails is the same as the case against building your own auth: the threat model shifts constantly. Use infrastructure that is purpose-built and continuously maintained.

If the prompt is the attack surface, then identity and authorization infrastructure needs to be the actual control plane that controls what an agent can call, write, or send, enforced at the infrastructure level rather than relying on what's in the context window.

A permission boundary enforced outside the model can't be overwritten by a malicious document. It would have to be bypassed. That's the architectural shift: identity security makes agent behavior predictable and auditable by design.

Agents need to be treated as non-human identities (NHI): entities that authenticate, hold scoped credentials, operate under defined permissions, and can have access revoked. Most teams aren't doing this. They're giving agents ambient, inherited access. And, that's why OWASP Agentic Applications Top 10 (released December 2025) lists "Identity & Privilege Abuse" as #3 specifically because of it.

The right model mirrors how human identities work in well-run organizations: agents authenticate against an IdP, receive credentials scoped to the minimum permissions required for the task, and that access can be revoked when something goes wrong. Federation handles the cross-system problem.

This is an area where Ory is actively building. Ory Hydra handles OAuth-based token issuance and revocation. The broader question of how to scope and enforce agent-level permissions across tools, APIs, and data sources is one the industry is still working out, and it's a problem that points back to the trusty principle of least privilege access (yes, pun intended).

For agent-to-agent communication specifically, the credential question gets more complex. Shared, static credentials are a liability when agents can be compromised through content injection. The right direction is short-lived, interaction-scoped credentials whether that's OAuth2 tokens, JWTs, or something like SPIFFE/SPIRE so that a compromised agent can't be used as a pivot point into the rest of the system.

The most forward-looking thread in the room was what happens when agentic systems scale to hundreds of agents operating in parallel. An emerging model is hierarchical: a small set of strategic agents (orchestrators, planners) receive delegated authority from a human operator and direct work to task-oriented agents (executors, specialists). The human manages the top tier; permissions and context flow downward.

The non-negotiable principle: there is always a human at the top of that chain who is accountable. Agents act; humans are responsible. No permission should exist in the system without a human owner who can be identified.

Bruce Schneier's line, quoted in the presentation, is the right frame: "Security is a process, not a product." IAM is foundational, but it doesn't replace runtime isolation, network segmentation, egress filtering, or anomaly detection. Each layer assumes the one before it has already failed. Identity is where you start, not where you stop.

The teams getting this right are treating agent identity as a first-class problem from day one, because retrofitting a control plane is much harder than building one.

If you're thinking through how Ory fits into your agentic architecture — token issuance, scoped credentials, ReBAC for agentic permissioning — we'd love to talk.